The role of whitebox in a WISP/FISP MPLS core

Whitebox, if you aren’t familiar with it, is the idea of separating the network operating system and switching hardware into commodity elements that can be purchased separately. There was a good overview of whitebox in this StubArea51.net article a while back if you’re looking for some background.

Lately, I’ve had a number of clients interested in deploying IP Infusion with either Dell, Agema or Edge Core switches to build an MPLS core architecture in lieu of an L2 ring deployment via ERPS. Add to that a production deployment of Cumulus Linux and Edge Core that I’ve been working on building out and it’s been a great year for whitebox.

There are a number of articles that extol the virtues of whitebox for web scale companies, large service providers and big enterprises. However, not much has been written on how whitebox can help smaller Tier 2 and 3 ISPs – especially Wireless ISPs (WISPs) and Fiber ISPs (FISPs).

And the line between those types of ISPs gets more blurry by the day as WISPs are heavily getting into fiber and FISPs are getting into last mile RF. Some of the most successful ISPs I consult for tend to be a bit of a hybrid between WISP and FISP.

The goal of any ISP stakeholder, large or small, should be the lowest cost per port on any network platform while maintaining the same level of service or better. That way, service offerings can be improved or expanded without passing the financial burden down to the end subscriber.

Whitebox is well positioned to aid ISPs in attaining that goal.

Whitebox vs. Traditional Vendor

Whitebox is rapidly gaining traction and working towards becoming the new status quo in networking. The days of proprietary hardware as the dominant force are numbered. Correspondingly, the extremely high R&D/manufacturing cost that is passed along to customers also seems to be in jeopardy for mainstream vendors like Cisco and Juniper.

Here are a few of the advantages that whitebox has for Tier 2 and 3 ISPs:

- Cost – it is not uncommon to find 48 ports of 10 gig and 4 ports of 40 gig on a new whitebox switch with licensing for under $10k. Comparable deployments in Cisco, Juniper, Brocade, etc typically exceed that number by a factor of 3 or more.

- SDN and NFV – Open standards and development are at the heart of the SDN and NFV movement, so it’s no surprise that whitebox vendors are knee deep in SDN and NFV solutions. Because whitebox operating systems are modular, less cluttered and have built in hardware abstraction, SDN and NFV actually become much easier to implement.

- No graymarket penalty – Because the operating system and hardware are separate, there isn’t an issue with obtaining hardware from the graymarket and then going to get a license with support. While the cost of the hardware brand new is still incredibly affordable, some ISPs leverage the graymarket to expand when faced with limited financial resources.

- Stability – whitebox operating systems tend to implement open standards protocols and stick to mainstream use cases. The lack of proprietary corner case features allows the development teams for a whitebox NOS to be more thorough about testing for stability, interop and fixing bugs.

- Focus on software – One of the benefits that comes from separating hardware and software for network equipment is a singular focus on software development instead of having to jump through hoops to support hundreds of platforms that sometimes have a very short product lifecycle. This is probably the single greatest challenge traditional vendors face in producing high quality software.

- ISSU – Often touted as a competitive advantage by the likes of Cisco and others, In Service Software Upgrade (ISSU) is now supported by some whitebox NOS vendors.
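
To put the cost bullet above into per-port terms, here is a minimal Python sketch. It uses only the figures quoted in that bullet (48 ports of 10 gig plus 4 ports of 40 gig for under $10k, and a factor of 3 for traditional vendors). Treating $10k as the actual price and exactly 3x as the multiplier are simplifying assumptions, and the function names are illustrative.

```python
# Figures taken from the cost bullet; both prices are bounds, not quotes.
PORTS_10G = 48
PORTS_40G = 4
WHITEBOX_PRICE_USD = 10_000   # upper bound ("under $10k"), assumed as the price
TRADITIONAL_MULTIPLIER = 3    # lower bound ("factor of 3 or more"), assumed exact


def cost_per_port(price_usd: float, ports: int) -> float:
    """Price divided by the number of physical ports."""
    return price_usd / ports


def cost_per_gbps(price_usd: float, capacity_gbps: int) -> float:
    """Price divided by total front-panel capacity in Gbps."""
    return price_usd / capacity_gbps


total_ports = PORTS_10G + PORTS_40G
total_gbps = PORTS_10G * 10 + PORTS_40G * 40

whitebox_port = cost_per_port(WHITEBOX_PRICE_USD, total_ports)
traditional_port = cost_per_port(
    WHITEBOX_PRICE_USD * TRADITIONAL_MULTIPLIER, total_ports
)

print(f"ports: {total_ports}, capacity: {total_gbps} Gbps")
print(f"whitebox:    ${whitebox_port:,.2f} per port")
print(f"traditional: ${traditional_port:,.2f} per port (at 3x)")
print(f"whitebox:    ${cost_per_gbps(WHITEBOX_PRICE_USD, total_gbps):,.2f} per Gbps")
```

Under those assumptions the whitebox switch works out to roughly $192 per port against roughly $577 per port for the traditional equivalent. Real quotes will vary with optics, support contracts and discounting, so swap in your own numbers.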

IP Infusion

IP Infusion (IPI) first got on my radar about 2 years ago when I was working through a POC for Cumulus Linux and just getting my feet wet in understanding the world of whitebox. What struck me as unique about them is that IP Infusion has been writing code for protocol stacks and modular network operating systems (ZebOS) for the last 20 years – essentially making them a seasoned veteran in turning out stable code for a NOS. As the commodity hardware movement started gathering steam, IP Infusion took all of the knowledge and experience from ZebOS and created OcNOS, which is a platform that is compatible with ONIE switches.

Earlier this year, I attended Networking Field Day 14 (NFD14) as a delegate and was pleasantly surprised to learn that IP Infusion presented at Networking Field Day 15 (NFD15) back in April. I highly recommend watching all of the NFD15 videos on IP Infusion, as you’d be hard pressed to find a better technical deep dive on IPI anywhere else. Some of the technical and background content here is taken from the video sessions at NFD15.

Background

- Has its roots in the GNU Zebra routing engine

- Strong adherence to standards-based protocol implementations

- Original white label NOS ZebOS has been around for 20+ years and is used by companies like F5, Fortinet and Citrix

Advantages

- Very service provider focused with advanced feature sets for BGP/MPLS

- OcNOS benefits from 20 years of white label NOS development and according to IP Infusion’s marketing material is reputed to have “six 9’s” of stability as observed by their larger ISP customers.

- Perpetual licensing – once the license is purchased, the only recurring cost is the annual maintenance which is a much smaller fee (typically around 15% of the license)

- Extensive API support – IPI has extensive API support for protocols like BGP to facilitate integration of automation and orchestration.

- Easier hardware abstractions than proprietary NOS – look for chassis based whitebox and form factors beyond 1U in the future

- Increased focus on the 1 Gbps switch market with Broadcom’s incredibly feature rich Qumran chipset so that start-up and very small ISPs can still leverage the benefits of whitebox. Also, larger Tier 2 and 3 ISPs will have a switching solution for edge, aggregation and CPE needs.
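
The perpetual licensing bullet above is easier to reason about with a quick calculation. The sketch below is only an illustration: the 15% rate comes from the text, but the license price is a made-up placeholder (not an IP Infusion price) and I am assuming maintenance is billed once per year as a flat percentage of the original license.

```python
MAINTENANCE_RATE = 0.15  # "typically around 15% of the license" per the text


def cumulative_cost(license_usd: float, years: int,
                    rate: float = MAINTENANCE_RATE) -> float:
    """One-time license fee plus `years` annual maintenance payments."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return license_usd + license_usd * rate * years


# Hypothetical placeholder price, used only to make the output readable.
HYPOTHETICAL_LICENSE_USD = 1_000

for year in range(0, 6):
    total = cumulative_cost(HYPOTHETICAL_LICENSE_USD, year)
    print(f"after {year} year(s): ${total:,.0f}")
```

At a 15% rate, it takes between six and seven years of maintenance payments to equal the original license fee, which is the point of the bullet: the recurring cost stays small relative to the one-time purchase.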

Integrating OcNOS with MikroTik/Ubiquiti

I’ve specifically listed IP Infusion instead of doing a more in depth comparison of all the various whitebox operating systems, because IP Infusion is really positioned to be the best choice for Tier 2 and 3 ISPs due to the available feature set and modular approach to building protocol support. Going a step further, it’s a natural fit for ISPs that are running MikroTik or Ubiquiti as the OcNOS operating system fills in many of the gaps in protocol support (MPLS TE and FRR especially) that are needed when building an MPLS core for a rapidly expanding ISP.

While I’ve successfully built MPLS into many ISPs with MikroTik and Ubiquiti and continue to do so, there is a scaling limit that most ISPs eventually hit. At that point they need to start using ASIC based hardware with the ability to design comprehensive traffic engineering policies.

The good news is that MikroTik and Ubiquiti still have a role to play when building a whitebox core. Both work very well as MPLS PE routers that can be attached to the IP Infusion MPLS core. Last mile services can then be delivered in a very cost effective way leveraging technologies like VPLS or L3VPN.

Other Whitebox NOS offerings

There are a number of other whitebox network operating systems to choose from. Although the focus has been on IPI due to the feature set, Cumulus Linux and Big Switch are both great options if you have a need to deploy data center services.

Cumulus Linux is also rapidly working on developing and putting MPLS and more advanced routing protocol support into the operating system and it wouldn’t surprise me if they become more of a contender in the ISP arena in the next few years.

This actually touches on one of the other great benefits of whitebox. You can stock a common switch and load whichever operating system best fits the use case.

For example, the Dell S4048-ON switch (48x10gig, 4x40gig) can be used for IPI, Cumulus Linux and Big Switch depending on the feature set required.

Some ISPs are getting into or already run cloud and colocation services in their data centers. If a compatible whitebox switch is used then stocking replacement hardware and operational maintenance of the ISP and Data Center portions of the network become far simpler.

Design elements of a WISP/FISP based on a whitebox MPLS core

Here are some examples of the most common elements we are trending towards as we start building WISPs and FISPs on a whitebox foundation coupled with other common low cost vendors like MikroTik and Ubiquiti.

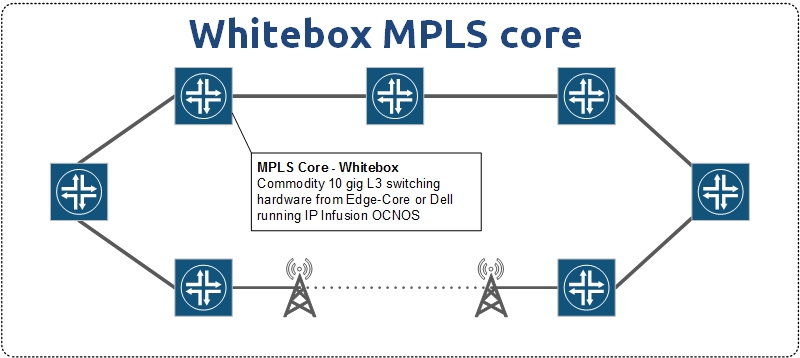

Whitebox MPLS Core

As ISPs grow, the core tends to move from pure routers to Layer 3 switches to better support higher speeds and take advantage of technologies like dark fiber and DWDM/CWDM to increase capacity. Many smaller ISPs are starting to compete using the “Google Fiber” model of selling symmetrical 1 Gbps to residential customers and need the extra capacity to handle that traffic.

MPLS support on ASICs has traditionally been extremely expensive with costs soaring as the port speeds increase from 1 gig to 10 gig and 40 gig. And yet MPLS is a fundamental requirement for the multi-tenancy needs of an ISP.

Leveraging whitebox hardware allows for MPLS switching in hardware at 10, 40 and 100 gig speeds for a fraction of the cost of vendors like Cisco and Juniper.

This allows ISPs to utilize dark fiber, wave and 10Gig+ layer 2 services in a more cost effective way to increase the overall capacity of the core.
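
For readers newer to MPLS, the reason it maps so well onto switching ASICs is that a core router forwards on an exact-match lookup of the label rather than a longest-prefix match on the IP header. Here is a deliberately simplified Python sketch of a single label switched path. The router names, labels and table layout are all hypothetical, and it ignores label stacks (such as VPN service labels), penultimate hop popping and the signaling protocols that would normally build these tables.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LfibEntry:
    action: str              # "swap" or "pop"
    out_label: int | None    # label written on egress, None when popping
    next_hop: str


# Hypothetical three-router path: PE1 -> P1 -> PE2. Label values are arbitrary.
INGRESS_FEC = {"PE1": {"customer-a": (100, "P1")}}  # FEC -> (pushed label, next hop)
LFIB = {
    "P1": {100: LfibEntry("swap", 200, "PE2")},
    "PE2": {200: LfibEntry("pop", None, "customer-a-site")},
}


def forward(ingress: str, fec: str) -> list[str]:
    """Trace a packet along the LSP and return a hop-by-hop log."""
    label, node = INGRESS_FEC[ingress][fec]
    trace = [f"{ingress}: push {label} -> {node}"]
    while True:
        entry = LFIB[node][label]  # exact-match lookup on the label only
        if entry.action == "swap":
            trace.append(
                f"{node}: swap {label}->{entry.out_label} -> {entry.next_hop}"
            )
            label, node = entry.out_label, entry.next_hop
        elif entry.action == "pop":
            trace.append(f"{node}: pop {label} -> {entry.next_hop}")
            return trace
        else:
            raise ValueError(f"unknown action {entry.action!r}")


for line in forward("PE1", "customer-a"):
    print(line)
```

The core router (P1 here) never inspects the customer's IP header, which is what lets multiple tenants share the same core while the PE routers handle the per-customer logic.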

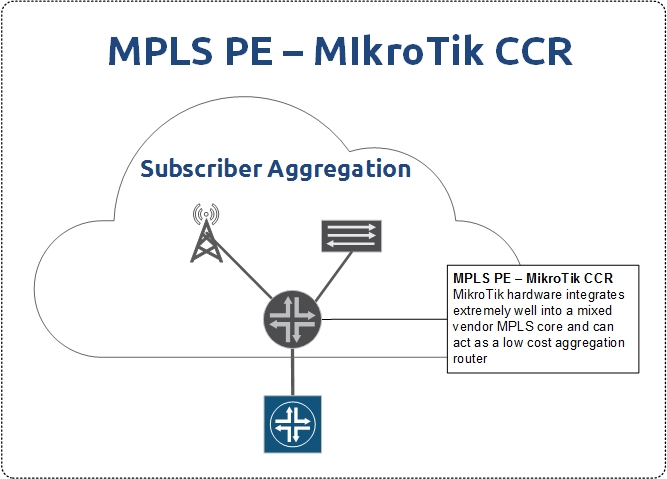

MPLS PE for Aggregation

MikroTik and Ubiquiti both have hardware with economical MPLS feature sets that work well as an MPLS PE. Having said that, I give MikroTik the edge here as Ubiquiti has only recently implemented MPLS and is still working on expanding the feature set.

MikroTik in contrast has had MPLS in play for a long time and is a very solid choice when aggregation and PE services are needed. The CCR series in particular has been very popular and stable as a PE router.

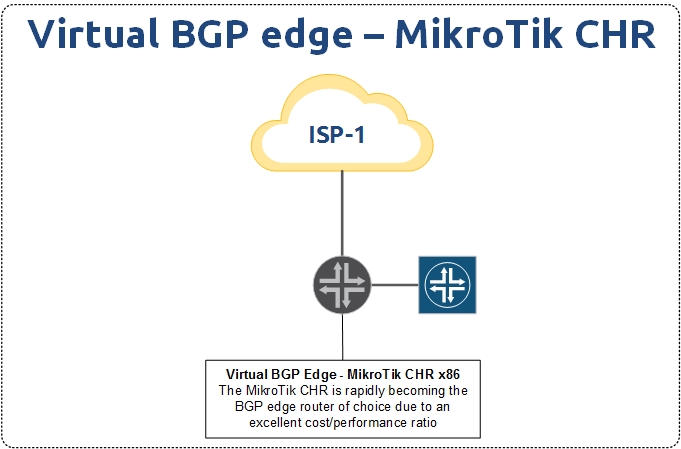

Virtual BGP Edge

MikroTik has made great strides in the high performance virtual market with the introduction of the Cloud Hosted Router (CHR) a little over a year ago.

Because MikroTik currently limits BGP processing to a single processor core, there has been a trend towards using x86 hardware with much higher clock speed per core than the CCR series to handle the requirement of a full BGP table.

The CHR is able to process changes in the BGP table much faster as a result and doesn’t suffer from the slow convergence speeds that can happen on CCRs with a large number of full tables.

Couple that with license costs that max out at $200 USD for unlimited speeds and the CHR becomes incredibly attractive as the choice for an edge BGP router.

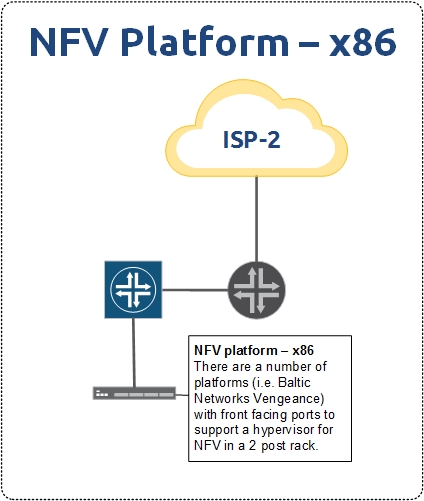

NFV platform

Network Function Virtualization (NFV) has been getting a lot of press lately as more and more ISPs are turning to hypervisors to spin up resources that would traditionally be handled in purpose built hardware. NFV allows for more generic hardware deployments of hypervisors and switches so that more specific network functions can be handled virtually.

Some examples are:

- BGP Edge routers (similar to the previous BGP CHR use case)

- BRAS for PPPoE

- QoE engines

- EPC for LTE deployments

- Security devices like IPS/IDS and WAF

- MPLS PE routers

There are many ways to leverage x86 horsepower to get NFV into a WISP or FISP. One platform in particular that is gaining attention is Baltic Networks’ Vengeance router which runs VMware ESXi and can be used in a number of different NFV deployments.

We have been testing a Vengeance router in the StubArea51.net lab for several months and have seen very positive results. We will be doing a more in depth hardware review on that platform as a separate article in the future.

Closing thoughts

Whitebox is poised for rapid growth in the network world, as the climate is finally becoming favorable – even in larger companies – to use commodity hardware and not be entirely dependent on incumbent network vendors. This is already opening up a number of options for economical growth of ISPs in a platform that appears to be surpassing the larger vendors in reliability due to a more concentrated focus on software.

Commodity networking is here to stay and I look forward to the vast array of problems that it can solve as we build out the next generation of WISP and FISP networks.